Use Google Analytics for your Google Panda optimizations

Last week I visited the Search Marketing Thursday, a small and interesting meeting here in the Netherlands. A presentation about the Google Panda update led me to think about the PHP forum at finalwebsites.com. The Panda update is about quality content which people like, read and mention to others. I checked some older forum posts using Google Analytics and noticed that many of them are very short and the number of visitors is for several pages less than 2-3 in a month.

I began thinking about my webmaster forum and how many posts may be considered weak content under Google Panda and several of Google’s quality content guidelines, including:

- Is this article written by an expert or enthusiast who knows the topic well, or is it more shallow in nature?

- Does this article have spelling, stylistic, or factual errors?

- Does the page provide substantial value when compared to other pages in search results?

- Was the article edited well, or does it appear sloppy or hastily produced?

- Are the articles short, unsubstantial, or otherwise lacking in helpful specifics?

I selected these five “questions” because of the nature of user generated content in forums. The forum is free of spam, but often questions are very short or a topic is not very extended (missing discussions). Google has sent webmasters a clear message that they need to remove lower quality content to prevent a loss of search engine rankings during the next Google Panda Update.

Download the page view stats from Google Analytics

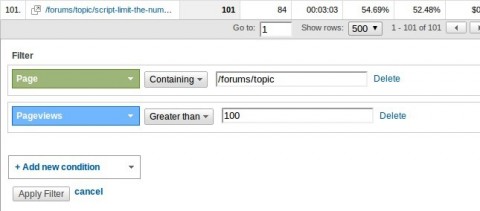

With the top content report in Google Analytics is it possible to filter all the pages with less visitors from a specific section using the advanced filter feature:

In my case I created a filter for my forum topic pages and selected only those with more than 100 visitors. I selected also the maximum number of rows to get a complete list. Doing this it was possible to export this data via Export -> CSV (near the upper left corner).

Next I opened the file in Excel and removed the upper rows to keep only those rows with the URLs and the page views. Next I saved the file under a new name (using a CSV format of course)

Joining the GA data with the records from our forum’s database

The following steps and code is only an example on how-to use that data for a website or application. In my case it’s the database from a bbPress forum, your own database structure and situation might be totally different!

According Google Analytics I have ~275 topic pages with more than 100 visitors and my current XML sitemap shows me total of ~400. With these numbers in mind I like to do the following:

- Keep all the topic records with more than 200 page views

- All other topics need to get reviewed

- Records not list in my Google Analytics report need to be disabled

The PHP Script

The script need to select all active topic URLs to compare them with the list from GA. First it opens the .csv file and stores the data in a two dimensional array, then it runs a query on the topic table and selects all active topic URLs. Using a loop it compares the .csv data with data from the topics table. For each comparison, the last two rules are used because the first rule doesn’t require an action. Additional information is entered inside the code snippet.

<?php

// add here here your MySQL connection

// the manual action to disable topic records

if (!empty($_GET['chst'])) {

mysql_query("UPDATE bb_topics SET topic_status = 9 WHERE topic_id = ".(int)$_GET['chst']);

}

// open the file and create an array

if (($handle = fopen("Analytics.csv", "r")) !== false) {

while (($data = fgetcsv($handle, 1000, "\t")) !== false) {

if ($data[0] != '\'Page\'') { // this is the row with headings

$u = trim($data[0], '\''); // Open Office created some quotes around the string value

$ga[$u] = $data[1]; // create pairs using the URL as array key

}

}

fclose($handle);

}

// get the topics from the database

$result = mysql_query("SELECT topic_id, topic_slug, topic_posts FROM bb_topics WHERE topic_status = 0 ORDER BY topic_posts");

$ct = 0; // just a simple counter value

echo '

<table>

<tr>

<th>Count</th>

<th>URL</th>

<th>Views</th>

<th>Posts</th>

<th> </th>

</tr>';

while ($r = mysql_fetch_assoc($result)) {

$url = '/myforum/topic/'.$r['topic_slug']; // according the URL format in GA

$views = (array_key_exists($url, $ga)) ? $ga[$url] : 0;

if ($views == 0) {

// set the topics on non-active

mysql_query("UPDATE bb_topics SET topic_status = 9 WHERE topic_slug = '".$r['topic_slug']."'");

} else {

if ($views < 200) { // we need to check those

echo '

<tr>

<td>'.$ct.'</td>

<td><a href="http://www.yourdomain.com'.$url.'">'.$url.'</a></td>

<td>'.$views.'</td>

<td>'.$r['topic_posts'].'</td>

<td><a href="myScript.php?chst='.$r['topic_id'].'">Change</a></td>

</tr>'; // note the change link, this is used for the some manual action

$ct++; // raise the counter value

}

}

}

echo '

</table>';

mysql_free_result($result);

?>Don’t store this PHP script in a public directory! A spider or bot can follow the “change” links and will remove your remaining URLs.

Stop, create a landing page first

Because I removed a lot of pages from my website I need to have some landing page with the information that the “disabled” page is not available anymore. Sure for the GoogleBot is a 301 redirect to the (section’s) homepage enough, but each “human” visitors deserves more.

After I created the landing page it’s time to run the script. I got a list of URLs with the number of page views. I checked every URL from that list and decided than to “deactivate” that page or not. In my case I removed ~150 pages with ZERO traffic and also ~150 pages with a tiny traffic and where the content is too thin or not relevant enough to keep those pages.

What’s next?

Write down which URLs are removed and monitor your remaining pages and optimize them if your bounce rate is high or the average time on the site is low. What are your tips to keep Google Panda from penalizing your site?

Published in: Search Engine Optimization